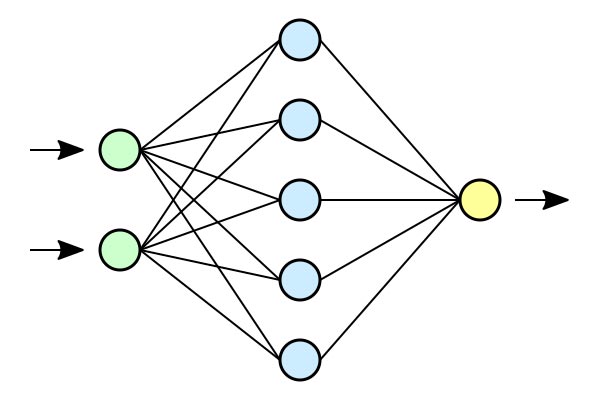

Artificial neural networks are computer software systems that, like the human brain, process information, recognize patterns and learn. The modern world is increasingly reliant on such systems, which include everything from weather prediction models to facial recognition technology.

These complex networks are difficult to dissect and interpret, which can make them targets for cyberattacks. CSU computer scientists and mathematicians are seeking to bring mathematics-based clarity to how artificial neural networks function and how they can be better protected against security threats. Their $1 million research project is supported by the Defense Advanced Research Projects Agency, the U.S. Department of Defense’s research arm, which invests in “breakthrough technologies for national security.”

The team is led by Michael Kirby, professor in the Department of Mathematics, and includes experts from both math and computer science.

Artificial neural networks, while complex, can be explained and simplified by viewing them not as black-box functions that fit data, but as geometric objects whose lower-dimensional subspaces represent the local geometry of that data. Defining networks as shapes while exploring their mathematical foundations could help researchers make them more explainable, trustworthy and secure.
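
To make the idea of local, lower-dimensional geometry concrete, here is a minimal sketch that is not drawn from the team's work. It assumes synthetic data (points on a circle placed inside a 10-dimensional space) and uses a generic technique, principal component analysis on a small neighborhood, to show that a single direction captures nearly all of the local variation. The names `local_subspace`, `embed` and `explained` are hypothetical and chosen only for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical data: 500 points on a circle (a 1-D curve) embedded in 10-D space.
t = rng.uniform(0.0, 2.0 * np.pi, size=500)
circle = np.stack([np.cos(t), np.sin(t)], axis=1)      # shape (500, 2)
embed, _ = np.linalg.qr(rng.normal(size=(10, 2)))      # orthonormal 10x2 basis
data = circle @ embed.T                                # shape (500, 10)


def local_subspace(points, center_idx, k=20):
    """Singular values and directions of the k-nearest neighborhood of one point."""
    dists = np.linalg.norm(points - points[center_idx], axis=1)
    neighbors = points[np.argsort(dists)[:k]]
    centered = neighbors - neighbors.mean(axis=0)
    _, s, vt = np.linalg.svd(centered, full_matrices=False)
    return s, vt


s, vt = local_subspace(data, center_idx=0)
explained = s**2 / np.sum(s**2)  # share of local variance per direction

print("share of local variance in the leading direction:", explained[0])
```

Although each point has 10 coordinates, the neighborhood is close to a straight line, so the leading direction approximates the tangent to the curve at that point. That is the sense in which a low-dimensional subspace can describe data locally.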

“There is this idea, that there exists geometric structure in data, and that you can exploit this geometric structure to better understand information,” Kirby said.

Ultimately, the team aims to use insights into what they call the “geometry of learning” to understand how attacks that exploit the weaknesses of a trained machine learning algorithm can happen – something called adversarial AI – and what tools and methods could better defend against such attacks. In deep learning with images, for example, an adversarial attack adjusts an image’s pixel values so that it still appears normal to a viewer but the network misclassifies it – say, labeling a baseball as a polar bear.
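
The pixel-level attack described above can be sketched in a few lines. This is an illustration under stated assumptions, not the project's method: the "network" is a hypothetical logistic classifier with random weights, the "image" is random noise, and the perturbation follows the general style of the well-known fast gradient sign method, with the step size `eps` computed from the linear model's margin so that the label is certain to flip.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical stand-in for a trained network: a logistic classifier
# over a flattened 28x28 grayscale image with pixel values in [0, 1].
n_pixels = 28 * 28
w = rng.normal(size=n_pixels)
b = -0.5 * w.sum()  # a uniform mid-gray image sits exactly on the boundary


def predict_proba(x):
    """Probability that image x belongs to class 1."""
    return 1.0 / (1.0 + np.exp(-(x @ w + b)))


def sign_gradient_attack(x, y, eps):
    """Move every pixel by eps in the direction that increases the loss."""
    grad = (predict_proba(x) - y) * w  # gradient of cross-entropy w.r.t. x
    return np.clip(x + eps * np.sign(grad), 0.0, 1.0)


# A random mid-contrast "image"; the model's own prediction serves as the label.
x = rng.uniform(0.25, 0.75, size=n_pixels)
label_before = int(predict_proba(x) >= 0.5)

# For a linear model the distance to the boundary is known, so choose a step
# 1.5 times the smallest uniform per-pixel change that crosses it.
eps = 1.5 * abs(x @ w + b) / np.abs(w).sum()

x_adv = sign_gradient_attack(x, label_before, eps)
label_after = int(predict_proba(x_adv) >= 0.5)

print(f"per-pixel change: {eps:.4f}")
print(f"label before: {label_before}, label after: {label_after}")
```

No pixel moves by more than `eps`, yet the predicted label changes because every small change pushes the model's score in the same direction. Real image classifiers are nonlinear, so practical attacks estimate this direction from the network's gradient rather than from a closed-form margin.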

“We’re hoping to use insights into the geometry of the learning space to both understand how adversarial AI emerges and identify ways to defend against adversarial attacks,” said Nathaniel Blanchard, assistant professor in the Department of Computer Science and co-principal investigator on the project. He added, “The work is really a return to understanding basic principles of neural networks from a mathematical perspective, and then ensuring the insights we gain map to practical application.”

Other investigators on the grant are Emily King and Chris Peterson in mathematics, and Yajing Liu in electrical and computer engineering.