Envision the future of classrooms in America, where technology and human instruction intermingle to create the best possible educational environment. Students work with technology and their peers, aided by a classroom teacher and an AI partner.

That’s the vision of the AI Institute for Student-AI Teaming, a new $20 million research collaboration funded by the National Science Foundation. Colorado State University will work with other universities to expand how AI is used in K-12 education.

“With growing classroom sizes and online learning, it becomes increasingly difficult for teachers to offer individualized instruction,” wrote the NSF in announcing the new institutes. “This new AI institute will focus research on developing ‘AI partners’ that will facilitate collaborative learning in classrooms by interacting naturally through speech, gesture, gaze, and facial expression in classrooms.”

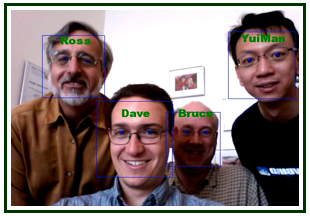

Ross Beveridge, a professor in the Department of Computer Science at CSU, is one of four co-principal investigators on the project led by the University of Colorado Boulder. CSU will play a key role in enabling the in-classroom AI partner to see and make sense of what it is seeing.

In 1993, Beveridge founded CSU’s computer vision research group, which has most recently focused on computer vision for AI agents.

The AI Institute for Student-AI Teaming is one of five new institutes announced on Aug. 25, and CSU is playing a critical role in two of them. In the second, the Trustworthy AI in Weather, Climate and Coastal Oceanography Institute, Professor Chuck Anderson, also of the Department of Computer Science, is a senior researcher collaborating with team leader Research Professor Imme Ebert-Uphoff.

Computer vision and multimodal AI agents

While there are hundreds of experts working on this classroom project, Beveridge’s focus is on bringing sight capabilities to AI partners. An effective AI partner in the classroom must be able to “autonomously sense, model, and facilitate collaborative learning, and [use] new algorithms that integrate speech and non-verbal signals to achieve deeper understanding of social interactions while learning,” according to the NSF announcement.

“If you are doing a physics exercise in school with a set of items on a table, a blind agent isn’t going to be a very good partner in learning,” said Beveridge. “Sight is important. I can’t ask a blind partner, ‘Hey partner, you’re here to help, what happens if this hits that?’ In order to ask questions such as ‘what happens if this hits that,’ you need tight integration of sight and speech.”

Beveridge and the computer vision group at CSU are well positioned to help give AI partners sight. The team, along with close collaborator James Pustejovsky at Brandeis University, has created a multimodal embodied avatar system, known as the Diana System, that can listen, see, and interpret what it hears and sees at the same time. Diana integrates computer vision and natural language processing so that the two work together seamlessly.

Brandeis is also part of the new student-AI teaming institute, where the team will have the opportunity to expand its work and adapt it to the needs of K-12 education.

Importance of sight in AI

To glimpse how revolutionary an agent with sight could be, consider the voice assistants many people already use, such as Siri or Alexa. Professor Beveridge made the point by asking, “Hey Siri, what am I pointing at?”

Her response? “I’m not sure I understand.”

Development of a sighted AI for use in classrooms will be no small feat, Beveridge said.

Beveridge added that he is proud to be part of an outstanding computer science program that is participating in this new initiative, and that he is excited to help shape the future of K-12 education.

“The NSF has a long history of creating revolutionary centers, but there aren’t that many of them, so they are a big deal,” he said. “It’s impressive that between Chuck Anderson and I we have managed to land CSU in two of these new groups. Let’s be honest, that’s pretty boast-able.”

The Department of Computer Science is a part of the CSU College of Natural Sciences.